Vercel's April 2026 Incident Is a Textbook NHI Problem: What to Rotate and Why

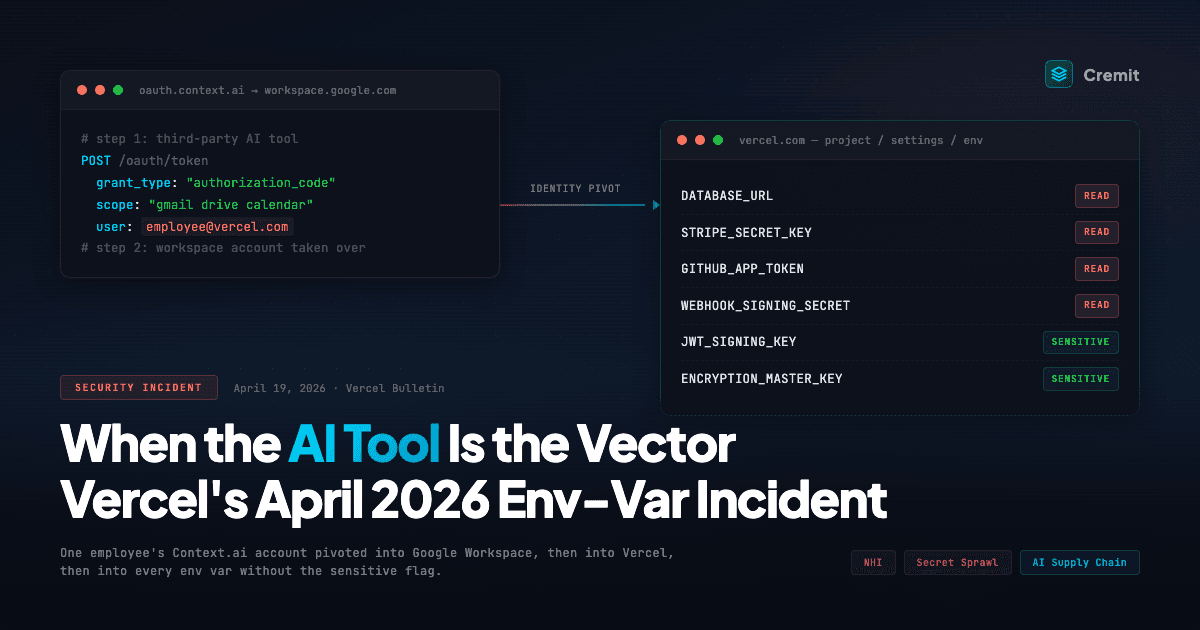

Vercel confirmed an unauthorized-access incident on April 19, 2026, that started in a third-party AI tool, pivoted through Google Workspace, and reached environment variables in a subset of customer projects. The exposure surface is every env var that was not marked sensitive. Here is what is confirmed, what is noise, and what to rotate first.

A breach that happened somewhere else

On April 19, 2026, Vercel put out a security bulletin confirming unauthorized access to some internal systems and, with it, access to environment variables in a subset of customer projects. The bulletin is direct about the path. The incident did not start at Vercel. It started in a third-party AI tool used by a Vercel employee, which let the attacker take over that employee's Google Workspace account, which in turn let them reach Vercel's internal systems.

That is what actually matters. Everything else circulating this weekend, the ShinyHunters branding, the BreachForums-dot-ai post, the alleged two million dollar ransom screenshot, the employee-panel theory, sits somewhere between unverified and contested. We will come back to it.

For most teams reading this, "was Vercel breached" is not the useful question. Vercel confirmed that. The useful question is which of your own credentials were sitting in a Vercel environment variable without the sensitive flag, because those are now untrusted.

This post walks through what is confirmed, how the path got in, why the sensitive flag is the single thing that decides how bad this is for you, how it maps to the Non-Human Identity Kill Chain, and what to rotate, in what order, right now. If you run a Vercel project in production and you cannot immediately say which of your env vars are sensitive, skip to the rotation playbook and come back.

Confirmed facts, and what is still noise

Separating the two is worth doing before you rotate anything, because decisions belong to the first list, not the second.

Confirmed by Vercel's bulletin (April 19–20, 2026):

- Unauthorized access to certain Vercel internal systems did occur.

- The access originated from the compromise of Context.ai, a third-party AI tool used by a Vercel employee.

- From Context.ai, the attacker took over the employee's Google Workspace account.

- Some customer environment variables that were not marked as "sensitive" were accessed.

- Vercel has no evidence that values stored as Sensitive Environment Variables were accessed.

- A limited subset of customers is affected, and Vercel is contacting them individually.

- Recommended actions include rotating non-sensitive environment variables, enabling the sensitive flag going forward, reviewing activity logs, hardening Deployment Protection, and rotating Deployment Protection tokens.

Still unverified as of writing:

- An account using the "ShinyHunters" handle on BreachForums-dot-ai initially posted about the incident. Whether that account is actually the ShinyHunters extortion group is contested. Messages attributed to ShinyHunters on Telegram deny involvement and claim the account is an impersonator.

- BreachForums-dot-ai itself is contested ground. There have been multiple iterations of BreachForums and RaidForums across takedowns and ownership changes, and which instance is "real" right now is part of the argument.

- Screenshots of a two million dollar ransom demand have circulated. Authenticity is not established.

- A theory that the initial vector was an employee panel compromise is being floated. That could just as easily be a screenshot taken after lateral or vertical movement from the Google Workspace access. The initial vector beyond Context.ai is not detailed publicly.

- "Limited subset" has not been quantified. Vercel has many customers. "Small" can be a lot of projects in absolute terms.

The first list is your decision surface. The second list is worth watching but not worth waiting on. Attackers cash out before the story settles.

The attack path we do know

The path Vercel described is short, but spelling it out is useful because it tells you who actually has to change behavior.

First, Context.ai was compromised. Context.ai is an AI product used by a Vercel employee, sitting inside that employee's personal productivity workflow. Whatever access that tool held into the employee's Google Workspace was, in effect, access the attacker held once Context.ai fell.

Then the attacker used that access to take over the employee's Google Workspace account. This is where an external breach turned into an internal-to-Vercel one. From here on, the attacker was inside an identity that Vercel's internal systems trust.

With that identity, the attacker reached Vercel's internal systems and, from there, customer environment variables stored without the sensitive flag.

A couple of things about this chain are worth calling out. The attack never crossed a Vercel product boundary in the usual sense. It crossed an identity boundary. And the identity it crossed was a human one that had adopted a third-party AI tool. Same pattern we wrote about with MoltBot and with the Cline/Clinejection supply-chain work: a productivity AI agent becomes a pivot into systems it was never explicitly granted access to, because it inherits the access of the human using it.

If your organization has people using AI tools that touch their work email, calendar, documents, or identity provider, this is the shape of exposure you should be thinking about, regardless of whether you run anything on Vercel.

Why "sensitive" is the word doing all the work

Vercel has two ways to store an environment variable. They look identical in the dashboard. They behave very differently during an incident.

A standard environment variable is stored so that its value can be read back. It has to be: builds, serverless functions, and the dashboard all need the string. This is how environment variables work on most platforms, and it is the default when you add one on Vercel.

A Sensitive Environment Variable is encrypted at rest, in a form where the value cannot be read back. Not by the dashboard, not by a Vercel admin, and not by someone who has gotten internal access to Vercel's systems. The value is injected into the running build or function at execution time and is not retrievable after that.

The thing to take away from this incident is that the attacker reached non-sensitive environment variables. Vercel says it has no evidence that sensitive environment variables were accessed, and given how sensitive values are stored, that claim is consistent with platform design, not a hopeful assumption.
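To make the difference concrete, here is a toy in-memory model of the two storage modes. It is a sketch of the behavior described above, not Vercel's implementation: the class, its method names, and the variable names are all hypothetical, and real sensitive storage relies on encryption rather than a boolean flag.

```python
from dataclasses import dataclass, field


class NotReadable(Exception):
    """Raised when a caller tries to read back a write-only value."""


@dataclass
class ToyEnvStore:
    # name -> (value, is_sensitive). A toy stand-in, not Vercel's storage.
    _vars: dict = field(default_factory=dict)

    def set(self, name: str, value: str, sensitive: bool = False) -> None:
        self._vars[name] = (value, sensitive)

    def read_back(self, name: str) -> str:
        """Models the dashboard or internal-access path."""
        value, sensitive = self._vars[name]
        if sensitive:
            raise NotReadable(name)
        return value

    def inject_at_runtime(self) -> dict:
        """Models the build/function path: every value is delivered, flag or not."""
        return {name: value for name, (value, _) in self._vars.items()}

    def readable_by_insider(self) -> dict:
        """What someone holding internal read access could walk away with."""
        return {name: value for name, (value, s) in self._vars.items() if not s}


store = ToyEnvStore()
store.set("DATABASE_URL", "postgres://example", sensitive=False)
store.set("PAYMENT_KEY", "example-payment-key", sensitive=True)

# Only the non-sensitive variable is reachable through the read-back path.
exposed = store.readable_by_insider()
```

Both variables still reach the running function through `inject_at_runtime()`, which is why flipping the flag costs the application nothing. The only path that changes is the read-back path, and that is the one the attacker used.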

For most teams, the painful part of reading that is realizing how many secrets are sitting on the non-sensitive side. Not because someone decided they shouldn't be sensitive, but because the sensitive flag isn't the default and nobody went back to toggle it. Same reason SSRF reaches cloud metadata on most cloud networks: not a design failure, a default that worked well enough that nobody revisited it.

So the rotation call isn't subtle. If a credential in one of your Vercel projects wasn't marked sensitive, treat it as exposed. Rotate it. Whether your specific project was on Vercel's contacted list is a question for incident reporting, not rotation.

For context, we published a piece on Vercel environment variable exposure exactly a year ago, in April 2025: Vercel Environment Variables Best Practices (With Real Cases). The angle there was different. It covered NEXT_PUBLIC_ misuse that quietly ships server-only secrets into the client bundle, and the rate at which live secrets actually turn up in public Vercel deployments. That was a developer-layer story. This weekend's incident is the same underlying premise, "Vercel environment variables are effectively an NHI store," confirmed at the platform layer.

This is the NHI Kill Chain, not a Vercel-specific story

We have been running a series on Non-Human Identity security patterns, the Kill Chain. Four of those patterns show up inside this one incident.

Over-shared Key. A single API key or database credential lives in a Vercel project's environment variables, in the CI pipeline, in a staging environment, and in a local development container on someone's laptop. Rotating it in one place does not rotate it in the others. The damage reaches every surface that holds a copy of that string, not just the project you rotated. We walked through this in the Over-shared Key post. If that is the dominant shape of your secrets, this incident is going to be a long rotation cycle, not a short one.

Out of Scope Loophole. Your secret manager inventory lists what is in the secret manager. Environment variables in a Vercel project, or in a third-party PaaS more generally, frequently are not listed there. They were added by a developer at midnight to unblock a deploy. The rotation tooling does not reach them. The security team does not know they exist. We described this pattern in the Out of Scope Loophole post, and it is the single biggest reason this week is going to be worse than it needs to be for a lot of teams.

Aged Key. Long-lived API keys with no rotation schedule stretch the useful lifetime of anything that leaks. A value that has been sitting in a Vercel env var since 2023 is not one leaked credential. It is whatever that credential can reach, for the entire period until you notice. Aged Key covered how time quietly multiplies the damage.

Ghost Key. Environment variables left in projects for services nobody uses anymore. The service is gone. The key still authenticates. Attackers do not care that your team decommissioned the integration.

If the Kill Chain series read a bit abstract, this is the concrete version. A platform incident is the forcing function that turns these four latent problems into a same-week rotation job.
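If you already keep even a rough inventory of where credentials live, the four patterns can be checked mechanically. The sketch below is one way to do that under stated assumptions: the `SecretRecord` shape, the surface and service names, and the 365-day age threshold are all hypothetical, and a real inventory should hold fingerprints rather than plaintext values.

```python
import hashlib
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SecretRecord:
    name: str
    value: str     # illustration only; a real inventory should hold fingerprints
    surface: str   # where this copy lives, e.g. "vercel", "ci", "vault"
    service: str   # what the credential authenticates to
    created: date


def fingerprint(value: str) -> str:
    """Stable identifier so copies of the same value can be grouped."""
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


def classify(records, managed_surfaces, active_services, today, max_age_days=365):
    """Return {(surface, name): set of pattern labels} for every flagged record."""
    surfaces_by_fp = defaultdict(set)
    for r in records:
        surfaces_by_fp[fingerprint(r.value)].add(r.surface)

    findings = {}
    for r in records:
        labels = set()
        if len(surfaces_by_fp[fingerprint(r.value)]) > 1:
            labels.add("over-shared")    # same value on more than one surface
        if r.surface not in managed_surfaces:
            labels.add("out-of-scope")   # lives where rotation tooling does not reach
        if (today - r.created).days > max_age_days:
            labels.add("aged")           # older than the rotation threshold
        if r.service not in active_services:
            labels.add("ghost")          # service retired, key may still work
        if labels:
            findings[(r.surface, r.name)] = labels
    return findings


records = [
    SecretRecord("DB_URL", "pg-secret", "vercel", "postgres", date(2023, 5, 1)),
    SecretRecord("DB_URL", "pg-secret", "ci", "postgres", date(2023, 5, 1)),
    SecretRecord("OLD_MAILER_KEY", "mail-secret", "vercel", "legacy-mailer", date(2025, 11, 1)),
]
findings = classify(
    records,
    managed_surfaces={"vault"},
    active_services={"postgres"},
    today=date(2026, 4, 20),
)
```

In this made-up inventory, the database credential is flagged three ways at once, which is the usual case: the patterns compound rather than appear one at a time.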

The noise, briefly

Now for the dark-forum chatter we put off earlier. Here is the honest read.

An account using the ShinyHunters handle posted about the Vercel incident on BreachForums-dot-ai. Meanwhile, Telegram messages attributed to the ShinyHunters group have denied involvement and called the forum account an impersonator. Neither claim is currently verifiable from outside. Whether BreachForums-dot-ai is the "real" successor to previous BreachForums instances is itself disputed, which is what you would expect given the takedown-and-rebrand history of that ecosystem. Screenshots of an alleged two million dollar ransom demand are also making the rounds, unauthenticated.

None of this changes what you should do. "Was it ShinyHunters" is an attribution question that researchers and law enforcement will chew on for weeks. "Should I rotate my non-sensitive environment variables" has the same answer no matter which threat actor ends up holding the data.

This section is here so it is clear we have seen the noise. Rotate off the confirmed facts.

Rotation playbook

Work in this order. Biggest reach first, then surface area, then hygiene. Do not audit before rotating. Rotate the production-reach values first and run the audit in parallel.

T+0, today.

- In every Vercel project you own, list environment variables that are not marked Sensitive. If you have many projects, script this via Vercel's API rather than clicking through the dashboard.

- Rotate, in this order:

  1. Production database credentials and any payment-processor keys.

  2. Third-party API keys with write scope (cloud providers, email senders, storage buckets, code hosts, messaging platforms).

  3. Signing keys, JWT signing secrets, webhook signing secrets.

  4. OAuth client secrets for production integrations.

- For each rotation, update every downstream system that holds a copy of the same value. This is the Over-shared Key failure mode and it is where incidents get extended.
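The listing and ordering steps above can be sketched as a short script. Treat the API details as assumptions to verify against Vercel's current REST API reference before relying on them: the `/v9/projects/{idOrName}/env` path, the `teamId` query parameter, the `envs` response key, and the `type` value `"sensitive"` are written from memory of that API. The name hints used for ordering are purely hypothetical heuristics, so review the resulting queue by hand.

```python
import json
import urllib.parse
import urllib.request
from typing import Optional

API_BASE = "https://api.vercel.com"  # assumption: confirm in Vercel's REST API docs

# Hypothetical name hints; tier order mirrors the rotation order above.
ROTATION_TIERS = [
    (1, ("DATABASE", "POSTGRES", "MYSQL", "MONGO", "STRIPE", "PAYMENT")),
    (2, ("API_KEY", "ACCESS_KEY", "TOKEN")),
    (3, ("SIGNING", "JWT", "WEBHOOK")),
    (4, ("OAUTH", "CLIENT_SECRET")),
]


def fetch_env_records(project: str, token: str, team_id: Optional[str] = None) -> list:
    """Fetch env var metadata for one project (endpoint and fields are assumptions)."""
    url = f"{API_BASE}/v9/projects/{urllib.parse.quote(project, safe='')}/env"
    if team_id:
        url += "?" + urllib.parse.urlencode({"teamId": team_id})
    req = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp).get("envs", [])


def non_sensitive(records: list) -> list:
    """Keep every record whose type is anything other than "sensitive"."""
    return [r for r in records if r.get("type") != "sensitive"]


def rotation_tier(key: str) -> int:
    """Map a variable name to a rotation tier; unknown names fall to tier 5."""
    upper = key.upper()
    for tier, hints in ROTATION_TIERS:
        if any(hint in upper for hint in hints):
            return tier
    return 5


def rotation_queue(records: list) -> list:
    """Names of non-sensitive variables, highest-priority tier first."""
    names = [r["key"] for r in non_sensitive(records)]
    return sorted(names, key=lambda k: (rotation_tier(k), k))


# Offline sample shaped like the assumed API response, for illustration.
sample = [
    {"key": "DATABASE_URL", "type": "encrypted"},
    {"key": "JWT_SIGNING_SECRET", "type": "plain"},
    {"key": "GITHUB_CLIENT_SECRET", "type": "encrypted"},
    {"key": "SENDGRID_API_KEY", "type": "encrypted"},
    {"key": "STRIPE_SECRET_KEY", "type": "sensitive"},
    {"key": "NEXT_PUBLIC_SITE_NAME", "type": "plain"},
]
queue = rotation_queue(sample)
```

For a live run, you would call `fetch_env_records(project, token)` once per project and feed the result to `rotation_queue`. The script only reads metadata and names. It deliberately never asks for decrypted values, because the rotation decision depends on the flag, not on what the value is.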

T+0 to T+1.

- Enable the Sensitive flag for the replacement values as you add them. Do not skip this step. Re-deploying with the replacement already stored as Sensitive is what closes the loop for the next incident.

- Set Deployment Protection to Standard at minimum for any public or production environment. Rotate Deployment Protection tokens that existed during the incident window.

- Pull activity logs for the incident window (Vercel's bulletin includes the period you care about). Look for unexpected deployments, unexpected environment variable reads, and unexpected team membership or token changes. You are looking for anomalies, not proof.

T+2 to T+7.

- Audit downstream systems for stale references to the old values. CI runners, preview environments, forks of your repos, internal developer tooling, ops runbooks, one-off scripts. This is where Out of Scope Loophole hurts. Until the old value is revoked at the issuing service, it is still a valid credential anywhere it was copied.

- For any read-only API keys that did not make the T+0 list, rotate them now. Read-only is a relative term. A key that can only read can still exfiltrate whatever it reads, and that is not access you want in hostile hands.
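For the stale-reference audit, a plain substring scan over checked-out repos, runbooks, and script directories catches a surprising amount. This is a minimal sketch, not a replacement for a real secret scanner: the function name, the label-to-value mapping, and the 1 MB file size cap are all assumptions, and it will miss encoded or split values.

```python
from pathlib import Path


def find_stale_references(root, old_values, max_bytes=1_000_000):
    """Scan files under root for old secret values.

    old_values maps a label (e.g. the env var name) to the retired value.
    Returns (relative_path, line_number, label) tuples; the value itself is
    never included in the output, so the report is safe to share.
    """
    root = Path(root)
    hits = []
    for path in sorted(root.rglob("*")):
        # Skip directories, broken links, and files too large to be config or code.
        if not path.is_file() or path.stat().st_size > max_bytes:
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file; a real audit should log this
        for lineno, line in enumerate(text.splitlines(), start=1):
            for label, value in old_values.items():
                if value and value in line:
                    hits.append((path.relative_to(root).as_posix(), lineno, label))
    return hits
```

Load `old_values` from wherever you staged the retired values for the rotation and keep them in memory only. Writing them to a new file to feed the scanner just creates one more copy to clean up.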

Ongoing.

- Monitor for the rotated values reappearing anywhere public: GitHub commits, gists, forks, paste sites, container images, build logs. The test of "was this rotation thorough" is whether the old value surfaces publicly in the next 90 days.

- Revisit your policy: which classes of secret require the Sensitive flag by default. For most teams, the answer should be "any value that would require a rotation response if leaked," which in practice is most of them.

If you do nothing else from this list, do step 1 through step 4.

The pattern is bigger than Vercel

We have now written about three incidents with the same shape: a productivity-layer AI tool, adopted by someone inside a company, ends up holding or able to reach credentials that were never meant to be exposed to it. MoltBot, the Cline/Clinejection research, and now Context.ai.

The common thread isn't that AI tools are uniquely dangerous. It's that they stretch the surface area of an employee's identity faster than security reviews keep up, and most of that stretching happens inside personal productivity accounts that the security team has limited visibility into. The moment an employee grants an AI tool read or write access to their Workspace, inbox, or code, that tool inherits every credential reachable from that account. And that inheritance is just as useful to an attacker as it is to the employee.

We covered this framing in "AI Agents Are Creating NHIs at Scale." The Vercel incident this weekend is what that abstract argument looks like when it actually runs.

What Cremit can and cannot see

The NHI space is full of vendor claims that do not survive first contact with a real incident. So let us split this into what we cannot do, what we do today, and what is on the roadmap.

Right now, we do not have visibility into Vercel's internal environment variable store itself, and nobody outside Vercel does. If your question is "did my specific key actually get read from my Vercel project," that answer is not coming from Cremit, and it is not coming from any external scanner.

What we do today is the other side of the problem. Argus detects exposed secrets in code, forks, CI logs, and public surfaces. Beyond that, we are actively wiring Argus into secret stores like HashiCorp Vault, AWS Secrets Manager, and GCP Secret Manager, so that "where each secret actually lives" becomes part of your inventory instead of something you reconstruct from memory at 2am. If the same value also sits in Vault, in a public repo, or in a leaked build log, we surface that connection on one screen.

On the roadmap, we are extending the same integration pattern to PaaS env-var stores in the Vercel shape. The point is to put the "env var in a third-party platform" case into the same inventory as Vault and code, over time. We are not committing to a date. We are committing to the direction.

This is where Over-shared Key hurts. It is also where most teams burn the week after an incident. Rotating in Vercel while the same value still lives in Vault, CI, a fork, an internal repo cloned to a personal GitHub account, a leaked container image, or a build log is not rotation. It is rotation-in-one-of-six-places. The inventory exists to stop that.

If that is useful to you right now, the product is at argus.cremit.io.

Rotate first, investigate later

The incident response window is the rotation window. Attribution, forum drama, and ransom screenshots are worth tracking, but not on the critical path. The critical path is this. Find the non-sensitive environment variables, rotate the ones with production reach, turn on the sensitive flag for the replacements, and then spend the next week cleaning up the copies that live outside Vercel.

If you take one thing from this post, take this. The default in most platforms is "readable." Rotation tooling rarely reaches where secrets actually sprawl. And the fastest way to ship a working app has always been "paste it in env vars." Incidents like this one are what slowly close that gap, one Sensitive flag at a time.

Prior Cremit research on Vercel:

- Vercel Environment Variables Best Practices: Preventing Secret Exposure (With Real Cases), the April 2025 developer-layer version of the same problem

Related reading from the Cremit NHI Kill Chain series:

- Over-shared Key: when one credential blows up multiple services

- Out of Scope Loophole: the secrets your inventory never sees

- Aged Key: how time quietly multiplies the damage

- Ghost Key: decommissioned services with still-valid credentials

- AI Agents Are Creating NHIs at Scale

- Viral AI Assistant MoltBot: Your API Keys Are Exposed on the Internet

- Clinejection: AI Supply Chain Attack Anatomy

Sources:

- Vercel Security Bulletin, April 2026 Security Incident: https://vercel.com/kb/bulletin/vercel-april-2026-security-incident

- Vercel Docs, Sensitive Environment Variables: https://vercel.com/docs/environment-variables/sensitive-environment-variables

- Vercel Docs, Deployment Protection: https://vercel.com/docs/deployment-protection

- GitGuardian, 2025 State of Secrets Sprawl Report: https://www.gitguardian.com/state-of-secrets-sprawl-report-2025
